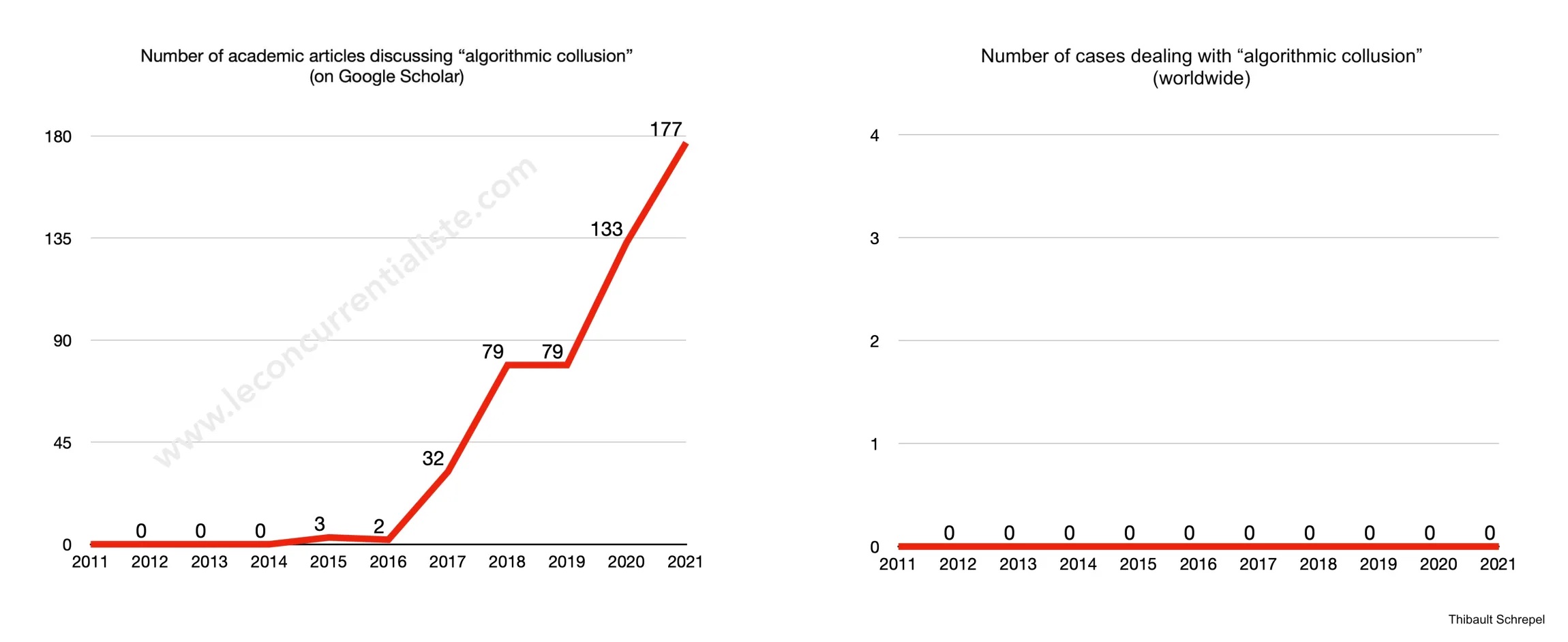

The OECD defines algorithmic collusion as the practice whereby firms use algorithms “as a facilitating factor for collusion” in order to “enable new forms of co-ordination that were not observed or even possible before.” I think that definition is too broad because it includes practices decided by humans. I’d rather define algorithmic collusion as the practice by which computers decide and implement collusion (e.g., an algorithm is instructed to maximize profit and discovers that charging the same price as competitors best achieves that goal).

The literature on the subject is abundant (see graph on the left). But even though the concept was popularized seven years ago, we are still waiting for agency and court cases (see graph on the right). Of course, I can’t exclude that I missed one or two somewhere in the world, but as far as I know, there are no cases dealing with algorithmic collusion as I define it. That leaves two possibilities: (1) algorithmic collusion is (very) uncommon, or (2) we fail to detect it. If the first scenario is confirmed, we are misspending scarce resources by focusing on this issue (and, therefore, ignoring others). To assess whether the second scenario is confirmed, we need to invest more in computational antitrust. Doing so will help detect other practices anyway.

***

To cite this short piece: Thibault Schrepel, Algorithmic Collusion in Just One Graphic, Concurrentialiste (Mar. 5, 2022).